The following is a deep dive into Filegraph, an OS for knowledge work & creative exploration. We’ll cover the architecture, what worked, what didn’t, and why personal-scale semantic graphs might finally make linked data practical.

30-second version

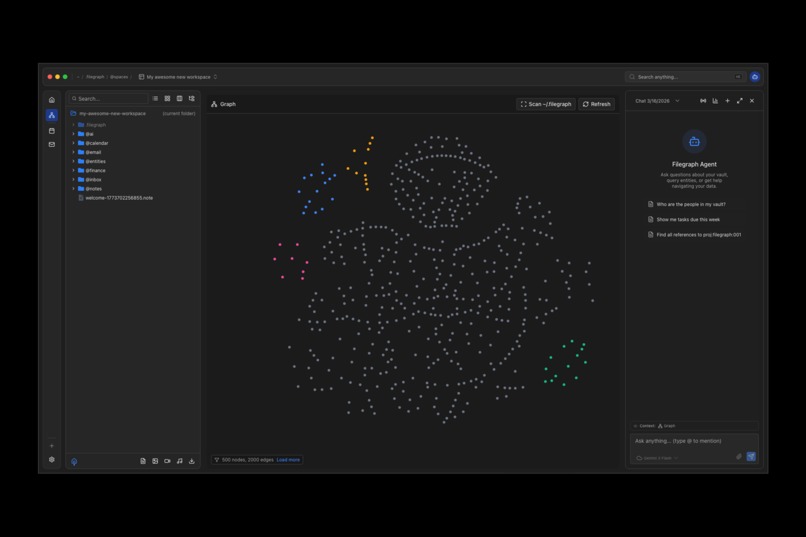

Filegraph turns your filesystem into a queryable knowledge graph. Files are nodes, relationships are edges, and a local AI agent can reason over the whole thing. Your data stays on your machine as plain files - backup is just copying a folder.

The Problem

Your knowledge is scattered. Notes in Obsidian. Docs in Notion. Code in VSCode. Tasks in Linear. Calendar in Google. Each tool excels at its domain - but they don’t talk to each other. When you need to answer “What projects is Tyler working on?” you’re opening five apps, searching five times, and manually connecting the dots in your head.

This isn’t just inconvenient - it’s a fundamental limitation of how we build personal software. Tools treat data as isolated silos. The connections between them exist only in your memory.

LLMs promised to change this. But ChatGPT doesn’t know Tyler. It can’t read your files. RAG helps - but RAG retrieves text, not relationships. The AI finds relevant documents and summarizes them. It doesn’t know that Tyler works for TechStart Inc., which is the client for the Chinese Learning App project, which has a milestone due next week. That structure exists in your data, but current AI tools can’t see it.

The semantic web had the right idea in 2001 - data with meaning, linked and queryable by machines. It gave us RDF, JSON-LD, and SPARQL. But it failed because it required global coordination. Every website would need to publish semantic data. The bootstrapping problem was insurmountable.

The insight: maybe the semantic web was right, just at the wrong scale. Not the entire internet - your personal data. Not global coordination - local extraction. The coordination problem becomes tractable when it’s just one user, one vault, one evolving ontology.

Three converging trends make this practical right now:

- Local-first software: users want data ownership. SQLite proves databases can be files. CRDTs solve sync.

- AI capabilities: LLMs can extract structure from unstructured text. Entity extraction is now a commodity.

- Datalog’s renaissance: Datomic showed that Datalog - a declarative query language from the 1980s - is perfect for modern apps. DataScript, XTDB, and others are bringing it to new platforms.

The pieces exist. No one has assembled them into a coherent system for personal computing.

What Filegraph Is

Filegraph is a local-first semantic graph indexed from the filesystem, with a Datalog-inspired query language and an AI agent that reasons over inspectable structure.

This isn’t another second-brain note-taking app. It’s a different model for personal computing: your data as a queryable graph you own, with an AI that can explain its reasoning.

A few non-obvious insights emerged through building and using it:

- Everything is a node - files, entities, relationships, memories, even agent actions. This uniform model eliminates the “files vs. database” distinction. Files are the database.

- Files as persistence, graph as index - the filesystem is the source of truth. The graph is a materialized view, rebuilt from files on startup. Backup is

cp -r. Version control isgit. Editing is any text editor. - Inspectable AI reasoning - when the agent answers “Tyler is working on the Atlas project,” it’s not generating text. It’s traversing the graph. You can ask “Why?” and see the query path:

person:tyler → worksOn → project:atlas. This transparency builds trust in a way RAG fundamentally can’t match. - Emergent schema - you don’t define a schema upfront. The AI extracts entities from your data. The schema emerges from what’s actually there.

What Filegraph is not (intentionally):

- A replacement for your filesystem - it indexes folders and files, it doesn’t introduce a new storage layer

- A cloud service - the core system runs locally with local AI

- A full semantic-web implementation - the focus is pragmatic JSON-LD + graph traversal, not OWL-complete inference

How It Works

The vault

Everything lives in ~/.filegraph - just a folder. Two file formats matter:

.data- structured entity collections (JSON-LD shaped).note- rich-text notes (TipTap block structure)

Entities use human-readable IDs: person:sarah:001, proj:website-redesign:001, ms:discovery:001. These are readable in prose, safe to type, stable across refactors, and parseable by tooling. A namespace registry maps each prefix to its canonical file - person:* lives in @entities/people.data, proj:* in @entities/projects.data.

The graph model

Internally, Filegraph maintains an in-memory EAV store (Entity–Attribute–Value) plus a link index.

A fact is an attribute-value pair on an entity:

{ e: "file:…", a: "name", v: "README.md" }

{ e: "file:…", a: "modified", v: 1734834123456 }

A link is a directed edge between two entities:

{ e1: "file:parent", a: "fs:contains", e2: "file:child" }

The filesystem indexer walks the vault, assigns stable UUIDs to files, and builds containment links. A reference indexer parses file contents for wikilinks and entity IDs, adding backlink edges. Both run incrementally on filesystem events.

The agent

The agent doesn’t answer from vibes - it issues tool calls that read from and compute over the vault. Every tool call is explicit (name + arguments). Every result is structured data that can be shown and debugged.

Example: “Who is on the Website Redesign Project team?”

If the answer is wrong, you can inspect exactly which tool returned the wrong fact.

Example: “What tasks is Marcus assigned to?”

1. resolve_entity({ name: "Marcus", namespace: "person" }) → person:marcus:001

2. query_graph({ operation: "find_by_attribute", attribute: "assignee", value: "person:marcus:001", namespace: "task" })

Two composable steps. No RAG, no hallucination, no black box.

Positioning

| Filegraph | Obsidian/Logseq | Neo4j/Neptune | RAG systems | |

|---|---|---|---|---|

| Primitive | facts + links | pages + backlinks | nodes + edges | text chunks |

| Storage | plain files | plain files | database | vector store |

| Local-first | ✓ | ✓ | ✗ | ✗ |

| Queryable structure | ✓ | limited | ✓ | ✗ |

| Inspectable reasoning | ✓ | ✗ | ✗ | ✗ |

The Live Agent Integration

For the Gemini Live Agent Challenge, we built the missing interaction layer: a voice-driven AI that doesn’t just answer questions about the workspace - it acts on it.

Build games

Design websites

Generate images & slide decks

Press ⌘⇧L - a pulsing audio orb appears, streaming your mic directly to Gemini Live over WebSocket. The agent has access to 50+ tools spanning vault, canvas, shell, calendar, UI, media, system, memory, search, and widgets.

Key technical decisions:

- Direct client WebSocket - the browser connects to Gemini Live for lowest latency. No proxy server.

- Ephemeral tokens - API keys never touch the frontend. The Rust backend provisions short-lived tokens via a Tauri command.

- Tool bridge pattern - all 50+ tools defined in OpenAI function-calling schema are automatically converted to Gemini

functionDeclarations. Zero duplication. Every tool works identically in text chat (OpenAI/Ollama/Anthropic) and voice mode (Gemini Live). - System context injection - before each session, the agent receives a snapshot of current UI state (active app, open files, canvas nodes, viewport) so it can make contextual decisions.

- AudioWorklet processing - mic capture runs in a dedicated audio thread, ensuring zero UI jank during streaming.

The result: voice command → tool execution → UI update fast enough to feel like direct manipulation. You can interrupt mid-sentence (VAD), switch tasks, or type text during a live session - voice and text coexist seamlessly.

Takeaways

- Everything is a node - once you see files, entities, memories, and agent actions as the same primitive, the architecture simplifies dramatically. There’s no impedance mismatch, no translation layer where bugs hide.

- Files as persistence, graph as index - inverting the typical model (database as truth, files as export) enables radical simplicity. The mental model matches the storage model.

- Inspectable AI reasoning builds trust - this is the one we’d most want people to internalize. When the agent’s answer is a graph traversal you can examine, not generated text you have to trust, the relationship between user and AI fundamentally changes.

- Emergent schema beats schema-first - defining a schema upfront fights how knowledge actually accumulates. Letting structure emerge from what’s there, then formalizing it incrementally, is more aligned with real usage.

- Audio UX is a different discipline entirely - with text you can scan and re-read. With voice, timing, interruption handling, and real-time feedback are the product. The pulsing orb and VAD state machine aren’t polish - they’re what make it feel like a conversation instead of a command line.

What’s Next

Filegraph’s north star is a single app that makes Obsidian, Notion, and VSCode redundant - a local-first semantic workspace where every piece of your knowledge is connected, queryable, and voice-navigable.

The longer-term bet is Filegraph’s underlying architecture as a BaaS: extracting the Filegraph runtime into a reusable local-first semantic backend - storage + graph engine + query + provenance + agent substrate - that any developer can build on top of. Think PocketBase, but for knowledge-graph applications. Planned extensions include Google Cloud Run deployment for teams, and a mobile voice companion that controls your desktop vault via Gemini Live API relay.

For a deeper dive into the architecture, ontology design, implementation details, and the full related work survey - a separate technical writeup will be published soon.

Filegraph is available for download on Mac, Windows, and Linux. Download the latest releases here:

This project is still brewing!More updates coming soon…

*Built for the Gemini Live Agent Challenge